More philosophy for children

The P4C trial security rating

Last week I criticised a trial which the authors claimed had shown that a programme of philosophy teaching (P4C) in primary schools improved pupils’ literacy and maths (click here). The organisation which ran the trial, the Education Endowment Foundation (EEF), a semi-independent, largely government-funded charity, has now defended its work (click here) without responding to any of the substantive issues, namely imbalance at baseline, negative primary results, >50% attrition on one primary endpoint and inappropriate cherry picking among data-driven secondary endpoints. Instead they defend a side issue, the lead researcher Stephen Gorard’s failure to report statistical significance. They also insist that the trial was evaluated independently using the EEF’s padlock rating scheme. The EEF evaluators awarded three padlocks out of five, which under that scheme is supposed to indicate that the results have a moderate degree of security.

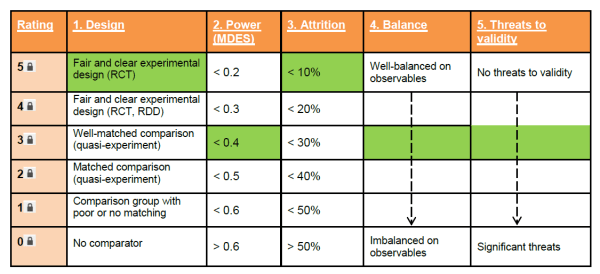

The padlock scheme is described here. The criteria, as described by the EEF, are as follows.

1. Design: The quality of the design used to create a comparison group of pupils with which to determine an unbiased measure of the impact on attainment.

2. Power: The minimum detectable effect that the trial was powered to achieve at randomisation, which is heavily influenced by sample size.

Implementation (thresholds that could be over-ridden by criteria 4, ‘Balance’, in exceptional circumstances)

3. Attrition: The level of overall drop-out from the evaluation treatment and control groups, which could potentially bias the findings.

Analysis and interpretation (judgement required)

4. Balance: The final amount of balance achieved at the baseline on observable characteristics in the primary analysis.

5. Threats to validity: How well-defined and consistently delivered the intervention was, and whether the findings could be explained by anything other than the intervention.

The final padlock rating is derived by rating design, power and attrition, taking the lowest, and adjusting it up or down according to the presence or absence of balance at baseline and other threats to validity. The final rating cannot be higher than the lowest rating for design or power.

The EEF evaluators’ rating is given in appendix 2 of the main P4C trial report, available here. I’ve reproduced the relevant table here:

Their justification was as follows:

“This evaluation was designed as a randomised controlled trial. The sample size was designed to detect a MDES of less than 0.4, by design, reducing the security rating to 3. At the unit of randomisation (school), there was zero attrition, and extremely low attrition at the pupil level also. The post-tests were administered by the schools by teachers who were aware of the treatment allocation, but with invigilation from the independent evaluators. Balance at baseline was high, and there were no substantial threats to validity.”

Let’s review these judgments.

Design

A cluster randomised trial with 48 schools and 3,159 pupils. Five padlocks is correct.

Power

I don’t know how to judge this without significance testing. But it’s a pretty large trial, albeit one which will lose some power from the cluster design. The EEF evaluators rated it three padlocks, which seems reasonable.

Attrition

The EEF evaluators judged attrition at the cluster level only, which is correct according to the EEF guide, because that was the level at which randomisation occurred. All randomised schools were followed up, so they rated 5 padlocks on this criterion.

The evaluators noted that pupil level attrition was very low, which suggests they somehow missed the 52% attrition on KS2 score. But unless they had reduced the attrition rating to below three padlocks, this would not have altered the final rating. The reason is that at this point the evaluator is supposed to allocate an interim padlock rating based on the lowest of the above three marks. The EEF guide reads:

“At this point the overall security rating for the evaluation will be determined by the minimum rating across the above three criteria. The minimum of first two criteria (planned design and power) determine the maximum security rating for the evaluation.”

So, however we interpret attrition, the overall security rating at this stage is three padlocks.
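The minimum rule can be checked with a short sketch. The function name `interim_rating` is my own, not the EEF’s, and the design and power scores are the ones the evaluators awarded (five and three).

```python
def interim_rating(design: int, power: int, attrition: int) -> int:
    """Interim padlocks: the minimum of the first three criteria,
    following the EEF guide passage quoted above."""
    return min(design, power, attrition)


# Scores the EEF evaluators gave for design and power in this trial.
DESIGN, POWER = 5, 3

# Try every possible attrition score from zero to five padlocks.
interim_by_attrition = {a: interim_rating(DESIGN, POWER, a) for a in range(6)}

for attrition, interim in interim_by_attrition.items():
    print(f"attrition={attrition} -> interim={interim}")
```

Any attrition score of three, four or five leaves the interim rating at three, because power is the limiting criterion. Only an attrition score below three would pull it down.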

The EEF guide then says that this interim rating should be adjusted up or down depending on the final two criteria, balance and other threats to validity.

Balance

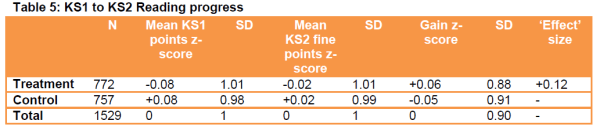

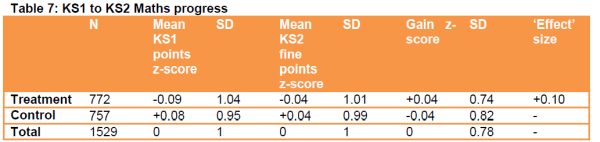

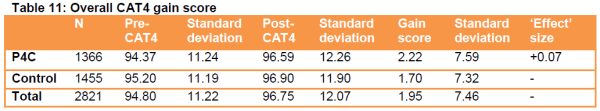

The evaluators judged that the groups were well balanced at baseline, on the basis of table 4 (baseline characteristics) in the report. But they ignored, or did not notice, the baseline imbalance of nearly 0.2 SD in KS1 reading and mathematics (total KS1 baseline scores are not reported) and of between 0.05 and 0.1 SD in CAT score. We can forgive the evaluators because these imbalances are not reported in table 4. They appear in the third columns of tables 5, 7 and 11 in the main report.

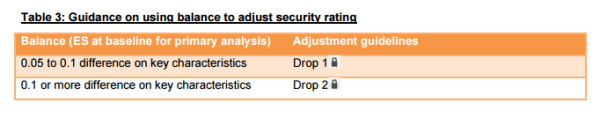

Since the Key Stage (KS) and Cognitive Ability Test (CAT) scores are the trial’s two primary outcomes, the evaluators made a mistake here, albeit a forgivable one. The EEF guide (Table 3 below) suggests that in the presence of a baseline difference of >0.1 on a key characteristic the evaluators should drop two padlocks.

Three minus two = one. The interim rating should now be one padlock.

Other threats to validity

The EEF guide lists six other potential threats, namely 1. Insufficient description of the intervention, 2. Diffusion (or ‘contamination’), 3. Compensation rivalry or resentful demoralisation, 4. Evaluator or developer bias, 5. Testing bias, and 6. Selection bias. It suggests that adjustment should be made as follows:

“If any of the above issues are identified as a cause for concern some judgement should be used in adjusting the security rating to account for any issues identified. The following are some suggested rules:

- If there is evidence of any one or two threats the rating should drop 1 padlock

- If there is evidence of more than two threats the rating should drop 2 padlocks”

The EEF evaluators did not detect any threats. But in my opinion there are two unambiguous ones: threat 1, because it was not made clear what teaching the control pupils got during the P4C lessons, and threat 6, because the outcomes on which the conclusions were based were change scores selected post hoc, and susceptible to regression to the mean. A critical reviewer might also argue that the selective choice of outcomes suggests evaluator bias (threat 4). But this seems a bit circular so I’m giving them the benefit of the doubt on that.
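For readers unfamiliar with regression to the mean, here is a minimal simulation. It uses made-up data, not the trial data, and every name and parameter in it is my own assumption. Each simulated pupil has a fixed ability, both tests measure it with independent noise, and nobody receives any intervention.

```python
import random
from statistics import fmean


def simulate_change_scores(n_pupils: int = 20000, seed: int = 0):
    """Return the mean change score for pupils whose baseline was below
    zero and for those whose baseline was zero or above. Simulated data."""
    rng = random.Random(seed)
    low_gains, high_gains = [], []
    for _ in range(n_pupils):
        ability = rng.gauss(0, 1)             # true, unchanging ability
        baseline = ability + rng.gauss(0, 1)  # noisy first test
        followup = ability + rng.gauss(0, 1)  # noisy second test, no treatment
        gain = followup - baseline
        (low_gains if baseline < 0 else high_gains).append(gain)
    return fmean(low_gains), fmean(high_gains)


low_gain, high_gain = simulate_change_scores()
print(f"mean gain, low baseline:  {low_gain:+.2f}")
print(f"mean gain, high baseline: {high_gain:+.2f}")
```

The pupils who started low appear to improve and those who started high appear to get worse, purely because part of each baseline score was noise. A group that happens to start lower at baseline will tend to show the larger change score for the same reason.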

Even if the teaching that control pupils got was recorded somewhere else, the problem of selecting change scores post hoc, and their susceptibility to regression to the mean, is a definite threat to validity. So at best the final rating should drop by a further padlock. One minus one = zero. A final padlock rating of zero out of five.
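The whole chain of arithmetic can be sketched as follows. The function names are hypothetical, the two-padlock drop for imbalance is my reading of the EEF guide’s Table 3, and the floor at zero is my assumption since the scale runs from zero to five. The sketch only models downward adjustments.

```python
def threat_drop(n_threats: int) -> int:
    """Suggested EEF rule: one or two threats drop 1 padlock,
    more than two drop 2."""
    if n_threats == 0:
        return 0
    return 1 if n_threats <= 2 else 2


def final_rating(design, power, attrition, balance_drop, n_threats):
    """Interim rating (minimum of the first three criteria) minus the
    balance and threat adjustments. Floored at zero (my assumption)."""
    interim = min(design, power, attrition)
    return max(0, interim - balance_drop - threat_drop(n_threats))


# The EEF evaluators: good balance, no threats detected.
eef_score = final_rating(design=5, power=3, attrition=5,
                         balance_drop=0, n_threats=0)

# My re-scoring: baseline imbalance >0.1 (drop 2), at least one threat.
my_score = final_rating(design=5, power=3, attrition=5,
                        balance_drop=2, n_threats=1)

print(eef_score, my_score)
```

Counting one threat or two makes no difference to my score, since both cost a single padlock under the suggested rule.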

According to the EEF, zero padlocks means the P4C trial “adds little or nothing to the evidence base”.

I’d be delighted to learn if I’ve made a mistake in the above. If not, the EEF may wish to look for new evaluators.

Jim Thornton

Camilla Nevill from EEF emailed me. Apparently it was only ever intended to evaluate one year group on the KS2 because the other year group would’ve been contaminated. KS2 attrition was therefore nearer to zero. This raises the attrition padlock score to five but won’t alter the final score, because that is arrived at by adjusting from the lowest of the first three scores. We had to agree to differ about the significance of the baseline imbalance and altered primary outcomes, and the importance of regression to the mean. As Camilla notes, some of the scoring is subjective.

Although I remain of the opinion that this trial provides, at best, weak evidence that P4C has no effect on reading or maths, I’m impressed at EEF’s receptiveness to this sort of criticism from outsiders like me.